
The current paradigm of artificial intelligence infrastructure is defined by a rapid generational churn that has effectively collapsed the traditional fiscal logic of data center management. As enterprises accelerate their transition to the NVIDIA Blackwell architecture, the imperative to offload previous-generation Ampere and Hopper assets has shifted from a routine hardware refresh to a critical…

Does GPU VRAM Pose a Security Risk? What Enterprises Need to Know Before Selling

In the rapidly evolving landscape of AI infrastructure, lifecycle management of High-Performance Computing (HPC) assets has moved from the basement to the boardroom. For the modern CTO, decommissioning a cluster of NVIDIA H100s or A100s is no longer a simple logistics…

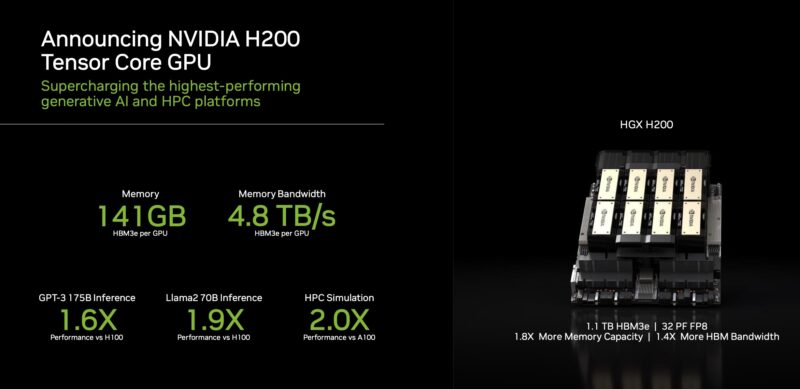

Upgrading H100 to H200

Semiconductor giant NVIDIA introduced its latest artificial intelligence chip, the H200, designed to support both training and deployment across a wide range of AI models. An upgraded version of the H100, the H200 carries 141 GB of memory and is focused on improving inference performance. The chip demonstrates a notable improvement of 1.4 to 1.9 times…
